Internals

Directory Structure

The Constellation IaC repository bootstraps clusters with the App of Apps pattern: a root ArgoCD application is applied first and then syncs all other applications.

The directory structure is as follows:

> cd applications
> tree -d
.
├── argocd  # ArgoCD apps
│   ├── dev
│   ├── prod
│   └── sandbox
├── external  # Internet-facing apps
│   └── <app-name>  # Application directory
│       ├── base  # Base manifests
│       └── overlays  # Kustomize overlays
│           ├── sandbox  # Sandbox overlays
│           ├── dev  # Dev overlays
│           └── prod  # Prod overlays
├── internal  # ClearRoute internal-facing apps
│   └── <app-name>  # Application directory
│       ├── base  # Base manifests
│       └── overlays  # Kustomize overlays
│           ├── sandbox  # Sandbox overlays
│           ├── dev  # Dev overlays
│           └── prod  # Prod overlays
└── management  # Management applications (needed for cluster operations)
    ├── cert-manager  # Helm values and manifests for cert-manager
    ├── cluster-autoscaler
    ├── external-dns
    ├── external-secrets
    ├── kube-prometheus-stack
    └── traefik
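The layout above is regular enough to compute paths from a scope, an app name, and an environment. The following Python sketch illustrates that convention only; the helper names are made up for this example and the app name `comply` is a hypothetical placeholder, not a confirmed directory in the repository.

```python
from pathlib import PurePosixPath

# Environments and app scopes as they appear in the directory tree.
ENVIRONMENTS = ("sandbox", "dev", "prod")
SCOPES = ("external", "internal")


def overlay_path(scope: str, app_name: str, env: str) -> PurePosixPath:
    """Return the Kustomize overlay directory for one app in one environment."""
    if scope not in SCOPES:
        raise ValueError(f"unknown scope: {scope!r}")
    if env not in ENVIRONMENTS:
        raise ValueError(f"unknown environment: {env!r}")
    return PurePosixPath("applications") / scope / app_name / "overlays" / env


def argocd_apps_path(env: str) -> PurePosixPath:
    """Return the directory holding the ArgoCD app definitions for an environment."""
    if env not in ENVIRONMENTS:
        raise ValueError(f"unknown environment: {env!r}")
    return PurePosixPath("applications") / "argocd" / env


# "comply" is a hypothetical app name used only for illustration.
print(overlay_path("internal", "comply", "dev"))
print(argocd_apps_path("dev"))
```

Rejecting unknown scopes and environments early keeps a typo from silently pointing at a directory that does not exist.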

Infrastructure as Code (IaC)

Terraform is used to provision infrastructure on AWS.

We make extensive use of upstream modules, such as github.com/terraform-aws-modules.

All Terraform files are located in the infra directory:

> tree -d
.
├── bootstrap
├── clusters  # tfvar files per cluster
├── files  # static files (templates, ...)
├── vars  # common tfvar files
└── *.tf  # Terraform files

Provisioning a New Cluster

To provision a new or existing cluster, add a corresponding ./clusters/<cluster-name>.tfvars file.

The following variables are supported:

# infra/clusters/dev.tfvars
region = "ap-southeast-2"
env    = "dev"

eks = {
  kubernetes_version = "1.33"
  cidr               = "10.0.0.0/16"
  min_size           = 2
  max_size           = 10
  instance_types     = ["t3.medium"]
}

route53 = {
  create_hosted_zone = true
  tld                = "clearroute.io"
  zone_name          = "dev"
}

argocd = {
  enabled        = true
  apply_root_app = false
}

access = {
  "constellation_admin" = {
    principal_arn = "arn:aws:iam::799468650620:role/aws-reserved/sso.amazonaws.com/eu-west-1/AWSReservedSSO_ConstellationAdmin_64b9f879eabd03dc"
    policies = {
      "cluster_admin" = {
        policy_arn   = "arn:aws:eks::aws:cluster-access-policy/AmazonEKSClusterAdminPolicy"
        access_scope = "cluster"
      }
    }
  }
  "constellation_engineering" = {
    principal_arn = "arn:aws:iam::799468650620:role/aws-reserved/sso.amazonaws.com/eu-west-1/AWSReservedSSO_ConstellationEngineering_9dda2a0a0849d418"
    policies = {
      "read_only" = {
        policy_arn   = "arn:aws:eks::aws:cluster-access-policy/AmazonEKSAdminViewPolicy"
        access_scope = "cluster"
      }
    }
  }
}

rds = {
  enabled           = true
  engine            = "postgres"
  version           = "17"
  family            = "postgres17"
  port              = 5432
  instances         = 1
  instance_class    = "db.t3.medium"
  allocated_storage = 5
}

irsa = {
  forge = {
    namespace            = "*"
    service_account_name = "forge"
    iam_policy = {
      "ECRDescribeImages" = {
        actions = [
          "ecr:DescribeImages",
          "secretsmanager:DescribeSecret"
        ]
        resources = ["*"]
      }
    }
    sub_condition = "StringLike"
  }
  cilium = {
    namespace            = "kube-system"
    service_account_name = "cilium-operator"
    iam_policy = {
      "CiliumENIAndPrefixDelegation" = {
        actions = [
          "ec2:*"
        ]
        resources = ["*"]
      }
    }
  }
  grafana_cloudwatch = {
    namespace            = "monitoring"
    service_account_name = "kube-prometheus-stack-grafana"
    iam_policy = {
      # https://github.com/monitoringartist/grafana-aws-cloudwatch-dashboards?tab=readme-ov-file
      "AWSBilling" = {
        actions = [
          "cloudwatch:ListMetrics",
          "cloudwatch:GetMetricStatistics",
          "cloudwatch:GetMetricData",
          "logs:DescribeLogGroups",
          "logs:DescribeLogStreams",
          "logs:GetLogEvents",
          "logs:FilterLogEvents"
        ]
        resources = ["*"]
      }
    }
  }
  external_secrets_operator = {
    namespace            = "external-secrets"
    service_account_name = "external-secrets"
    iam_policy = {
      "ExternalSecretsOperatorAll" = {
        actions = [
          "secretsmanager:ListSecrets",
          "secretsmanager:BatchGetSecretValue",
          "secretsmanager:GetResourcePolicy",
          "secretsmanager:GetSecretValue",
          "secretsmanager:DescribeSecret",
          "secretsmanager:ListSecretVersionIds",
        ]
        resources = ["*"]
      }
      "Renovate" = {
        actions = [
          "ecr:GetAuthorizationToken",
          "ecr:BatchCheckLayerAvailability",
          "ecr:GetDownloadUrlForLayer",
          "ecr:BatchGetImage",
          "ecr:DescribeImages",
          "ecr:DescribeRepositories",
          "ecr:ListImages"
        ]
        resources = ["*"]
      }
    }
  }
  comply-backend = {
    namespace            = "app-comply"
    service_account_name = "comply-backend"
    iam_policy = {
      "S3Access" = {
        actions = [
          "s3:GetObject",
          "s3:GetObjectVersion",
          "s3:PutObject",
          "s3:PutObjectTagging",
          "s3:DeleteObject",
          "s3:ListBucket",
          "s3:ListBucketVersions"
        ]
        resources = [
          "arn:aws:s3:::comply-policies-dev",
          "arn:aws:s3:::comply-policies-dev/*"
        ]
      }
    }
  }
  comply-backend-preview = {
    namespace            = "preview-app-internal-comply-pr-*"
    sub_condition        = "StringLike"
    service_account_name = "comply-backend"
    iam_policy = {
      "S3Access" = {
        actions = [
          "s3:GetObject",
          "s3:GetObjectVersion",
          "s3:PutObject",
          "s3:PutObjectTagging",
          "s3:DeleteObject",
          "s3:ListBucket",
          "s3:ListBucketVersions"
        ]
        resources = [
          "arn:aws:s3:::comply-policies-dev",
          "arn:aws:s3:::comply-policies-dev/*"
        ]
      }
    }
  }
}

irsa_clearroute_account = {
  external_dns = {
    namespace            = "external-dns"
    service_account_name = "external-dns"
    iam_policy = {
      # https://kubernetes-sigs.github.io/external-dns/latest/docs/tutorials/aws/#iam-policy
      "ChangeResourceRecordSets" = {
        actions   = ["route53:ChangeResourceRecordSets"]
        resources = ["arn:aws:route53:::hostedzone/*"]
      }
      "Route53Records" = {
        actions = [
          "route53:ListHostedZones",
          "route53:ListResourceRecordSets",
          "route53:ListTagsForResources"
        ]
        resources = ["*"]
      }
    }
  }
  cert_manager = {
    namespace            = "cert-manager"
    service_account_name = "cert-manager"
    iam_policy = {
      "ChangeResourceRecordSets" = {
        actions = [
          "route53:ChangeResourceRecordSets",
          "route53:ListResourceRecordSets"
        ]
        resources = ["arn:aws:route53:::hostedzone/*"]
        conditions = {
          "ForAllValues:StringEquals" = {
            variable = "route53:ChangeResourceRecordSetsRecordTypes"
            values   = ["TXT"]
          }
        }
      }
      "ListHostedZones" = {
        actions = [
          "route53:ListHostedZones",
          "route53:ListHostedZonesByName",
          "route53:GetChange",
          "route53:GetHostedZone",
        ]
        resources = ["*"]
      }
    }
  }
}
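To make the `irsa` entries more concrete, here is a Python sketch of the IAM documents that such an entry could translate to. The Terraform code that consumes these variables is not shown in this section, so the mapping below is an assumption based on how IRSA generally works: the role's trust policy compares the OIDC `sub` claim with `system:serviceaccount:<namespace>:<service_account_name>`, and `sub_condition` selects the comparison operator. The default of `StringEquals`, the function names, and the OIDC issuer and account ID are placeholders for illustration. The sketch also omits the `aud` check that many IRSA trust policies include.

```python
import json


def trust_policy(oidc_provider_arn, oidc_issuer, namespace,
                 service_account_name, sub_condition="StringEquals"):
    """Build an IAM role trust policy for one Kubernetes service account."""
    subject = f"system:serviceaccount:{namespace}:{service_account_name}"
    if "*" in subject and sub_condition != "StringLike":
        # StringEquals treats "*" as a literal character, so it would never match.
        raise ValueError("wildcard subjects need sub_condition = StringLike")
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Federated": oidc_provider_arn},
            "Action": "sts:AssumeRoleWithWebIdentity",
            "Condition": {sub_condition: {f"{oidc_issuer}:sub": subject}},
        }],
    }


def permission_policy(iam_policy):
    """Translate an iam_policy map (shaped like the tfvars) into IAM statements."""
    statements = []
    for sid, rule in iam_policy.items():
        statement = {
            "Sid": sid,
            "Effect": "Allow",
            "Action": rule["actions"],
            "Resource": rule["resources"],
        }
        if "conditions" in rule:
            # tfvars shape: operator -> {variable, values}; IAM shape: operator -> {variable: values}
            statement["Condition"] = {
                operator: {cond["variable"]: cond["values"]}
                for operator, cond in rule["conditions"].items()
            }
        statements.append(statement)
    return {"Version": "2012-10-17", "Statement": statements}


# Placeholder OIDC values; real ones come from the EKS cluster.
ISSUER = "oidc.eks.ap-southeast-2.amazonaws.com/id/EXAMPLE"
PROVIDER_ARN = f"arn:aws:iam::111122223333:oidc-provider/{ISSUER}"

preview = trust_policy(PROVIDER_ARN, ISSUER,
                       namespace="preview-app-internal-comply-pr-*",
                       service_account_name="comply-backend",
                       sub_condition="StringLike")
print(json.dumps(preview, indent=2))
```

Under these assumptions, the `comply-backend-preview` entry would let the `comply-backend` service account in any namespace matching `preview-app-internal-comply-pr-*` assume the role, which is why that entry sets `sub_condition = "StringLike"`.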

When a PR is submitted and merged, the GitHub Actions pipelines automatically pick up the changes and apply them.
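The pipeline definition itself is not part of this section. As a rough illustration of how the common and per-cluster tfvars files could be combined in a single run, the Python sketch below assembles a `terraform plan` command. The function name and the file name `common.tfvars` are hypothetical; `-chdir` and `-var-file` are standard Terraform CLI options, and later `-var-file` arguments take precedence over earlier ones.

```python
import shlex


def terraform_plan_command(cluster: str, common_var_files: list[str]) -> list[str]:
    """Assemble a terraform plan command for one cluster without running it."""
    # -chdir makes Terraform run inside infra/, so the var-file paths are relative to it.
    cmd = ["terraform", "-chdir=infra", "plan"]
    for name in sorted(common_var_files):
        cmd.append(f"-var-file=vars/{name}")
    # The cluster file comes last so its values override the common ones.
    cmd.append(f"-var-file=clusters/{cluster}.tfvars")
    return cmd


# "common.tfvars" is a hypothetical file name used only for illustration.
print(shlex.join(terraform_plan_command("dev", ["common.tfvars"])))
```

Returning the command as a list rather than a string keeps it safe to pass to `subprocess.run` without shell quoting issues.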

Cluster provisioning includes:

- VPC

- EKS

- RDS

- App-specific RDS DB credentials for each app in AWS Secrets Manager

- IAM settings (especially IRSA)

- Route53 settings

- ECRs for each app (if the app is a github.com/clear-route repository)

Furthermore, ArgoCD is installed on the EKS cluster using Helm.

Bridging Terraform to ArgoCD

We aim to maintain a clear separation between Terraform-managed resources and Kubernetes (EKS) managed resources. We strictly avoid managing Kubernetes manifests or Helm charts with Terraform, because the continuous reconciliation loops of Kubernetes and ArgoCD conflict with Terraform's ad hoc, run-on-demand approach.

However, ArgoCD sometimes requires values that are managed by Terraform. This gap is bridged using the GitOps bridge pattern.

To securely pass computed values to ArgoCD, an ArgoCD Cluster Secret is created during cluster provisioning. This secret carries all required Terraform values as annotations. The annotations can then be consumed in ArgoCD through an ApplicationSet that uses the Cluster generator, via {{ metadata.annotations.<annotation> }}.
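For orientation, the Python sketch below builds a manifest shaped like an ArgoCD cluster Secret with Terraform outputs attached as annotations. It is an illustration under assumptions, not the repository's provisioning code: the function name, the Secret naming scheme, and the role ARN are placeholders. The annotation keys are the ones referenced by the ExternalDNS example in this section.

```python
import json


def cluster_secret(cluster_name, annotations, server="https://kubernetes.default.svc"):
    """Build an ArgoCD cluster Secret manifest carrying values as annotations."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {
            "name": f"{cluster_name}-cluster",  # hypothetical naming scheme
            "namespace": "argocd",
            # This label marks the Secret as a cluster and is what the generator selects on.
            "labels": {"argocd.argoproj.io/secret-type": "cluster"},
            # Kubernetes annotation values must be strings.
            "annotations": {key: str(value) for key, value in annotations.items()},
        },
        "type": "Opaque",
        "stringData": {
            "name": cluster_name,
            "server": server,
            "config": json.dumps({"tlsClientConfig": {"insecure": False}}),
        },
    }


secret = cluster_secret("dev", {
    "cluster_name": "dev",
    "tld": "clearroute.io",
    "region": "ap-southeast-2",
    # Placeholder ARN; the real value would be a Terraform output.
    "external_dns_role_arn": "arn:aws:iam::111122223333:role/external-dns",
})
print(json.dumps(secret["metadata"], indent=2))
```

Because the values live in annotations on the Secret's metadata rather than in Git, computed values such as role ARNs do not need to be committed to the repository.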

Here is a short example of the ExternalDNS ArgoCD App:

# applications/argocd/dev/external-dns.yaml
apiVersion: argoproj.io/v1alpha1
kind: ApplicationSet
metadata:
  name: external-dns
  namespace: argocd
  finalizers:
    - resources-finalizer.argocd.argoproj.io
spec:
  generators:
    - clusters:
        selector:
          matchLabels:
            argocd.argoproj.io/secret-type: cluster
  template:
    metadata:
      name: external-dns
    spec:
      project: default
      sources:
        - repoURL: https://kubernetes-sigs.github.io/external-dns/
          chart: external-dns
          targetRevision: 1.20.0
          helm:
            values: |
              provider:
                name: aws
              txtOwnerId: {{ metadata.annotations.cluster_name }}
              domainFilters:
                - {{ metadata.annotations.cluster_name }}.{{ metadata.annotations.tld }}
              env:
                - name: AWS_DEFAULT_REGION
                  value: {{ metadata.annotations.region }}
              tolerations:
                - key: workload-tier
                  operator: Equal
                  effect: NoSchedule
                  value: baseline
              nodeSelector:
                workload-tier: baseline
              serviceAccount:
                annotations:
                  eks.amazonaws.com/role-arn: {{ metadata.annotations.external_dns_role_arn }}
      destination:
        server: https://kubernetes.default.svc
        namespace: external-dns
      syncPolicy:
        syncOptions:
          - CreateNamespace=true
          - SkipDryRunOnMissingResource=true
        automated:
          prune: true
          selfHeal: true
        retry:
          limit: 5
          backoff:
            duration: 5s
            maxDuration: 3m0s
            factor: 2

# applications/argocd/root-argocd-app.yml
apiVersion: argoproj.io/v1alpha1
kind: ApplicationSet
metadata:
  name: root-app
  namespace: argocd
  annotations:
    argocd-diff-preview/ignore: "true"
spec:
  generators:
    - clusters:
        selector:
          matchLabels:
            argocd.argoproj.io/secret-type: cluster
  template:
    metadata:
      name: root-argocd-{{metadata.annotations.cluster_name}}
    spec:
      project: default
      source:
        repoURL: https://github.com/clear-route/constellation-iac.git
        targetRevision: main
        path: "applications/argocd/{{metadata.annotations.cluster_name}}"
      destination:
        server: https://kubernetes.default.svc
        namespace: argocd
      syncPolicy:
        automated:
          prune: true
          selfHeal: true
        retry:
          limit: 5
          backoff:
            duration: 5s
            maxDuration: 3m0s
            factor: 2
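To see what the placeholders in the two manifests above resolve to, the following Python sketch substitutes annotation values into a template string. It is a simplified stand-in for ArgoCD's own templating, written only to illustrate the idea; the function name and the annotation values are assumptions for this example.

```python
import re

# Matches {{ metadata.annotations.<key> }} with or without inner spaces.
PLACEHOLDER = re.compile(r"\{\{\s*metadata\.annotations\.([\w./-]+)\s*\}\}")


def render(template: str, annotations: dict[str, str]) -> str:
    """Replace annotation placeholders with values from the cluster secret."""
    def substitute(match: re.Match) -> str:
        key = match.group(1)
        if key not in annotations:
            raise KeyError(f"cluster secret has no annotation {key!r}")
        return annotations[key]

    return PLACEHOLDER.sub(substitute, template)


# Example annotation values, assumed for illustration.
annotations = {"cluster_name": "dev", "tld": "clearroute.io", "region": "ap-southeast-2"}

# Path used by the root app to find the per-cluster ArgoCD apps.
print(render("applications/argocd/{{metadata.annotations.cluster_name}}", annotations))
# Domain filter used by the ExternalDNS app.
print(render("{{ metadata.annotations.cluster_name }}.{{ metadata.annotations.tld }}", annotations))
```

With these values the root app would sync `applications/argocd/dev`, which matches the per-environment directories under `applications/argocd`. This relies on the `cluster_name` annotation being equal to the environment directory name.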

GitOps

ArgoCD

argocd CLI

Tip: Download the ArgoCD CLI from here

Run the following command to authenticate to Constellation using the argocd CLI:

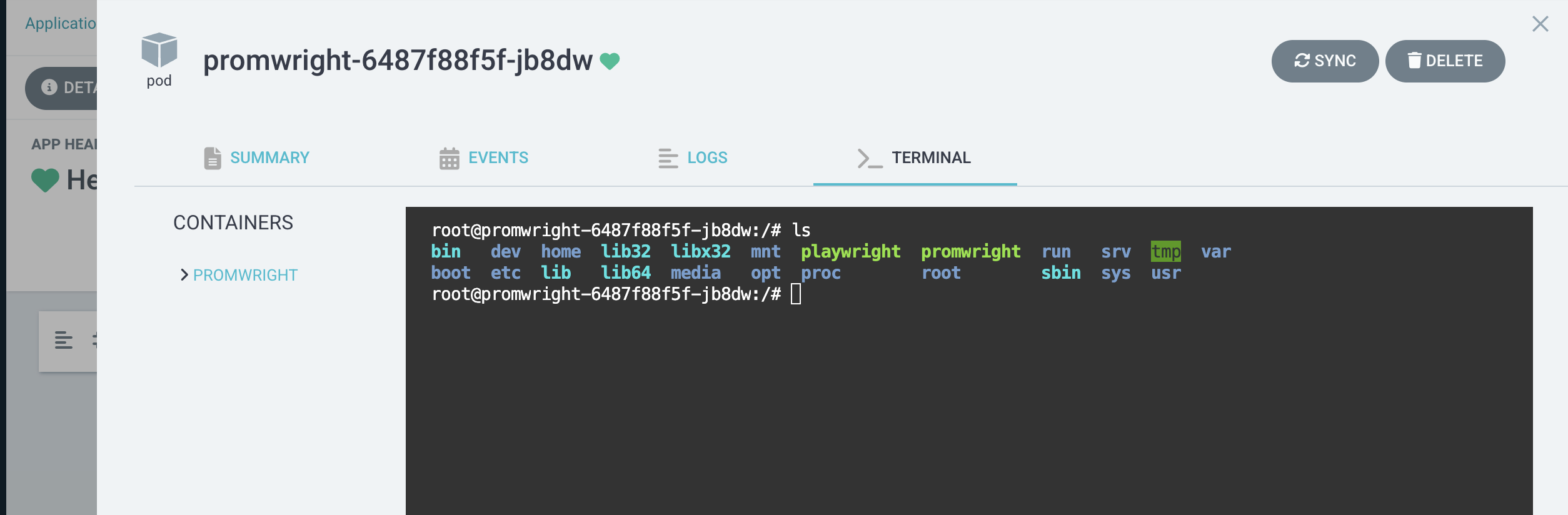

Web Terminal

Note: This might not work in Safari.

On dev and sandbox, the Web-based Terminal is enabled. This allows you to run arbitrary commands in any container, which is especially useful for troubleshooting: